I’ve been helping a team at Asynchrony improve using blocker clustering, a technique popularized by Klaus Leopold and Troy Magennis (presentation, blog post) that leverages a kanban system to identify and quantify the things that block work from flowing. It’s premised on the idea that blockers are not isolated events but have systematic causes, and that by clustering them by cause (and quantifying their cost), we can improve our work and make delivery more predictable.

I’ve been helping a team at Asynchrony improve using blocker clustering, a technique popularized by Klaus Leopold and Troy Magennis (presentation, blog post) that leverages a kanban system to identify and quantify the things that block work from flowing. It’s premised on the idea that blockers are not isolated events but have systematic causes, and that by clustering them by cause (and quantifying their cost), we can improve our work and make delivery more predictable.

The team recently concluded a four-week period in which they collected blocker data. At the outset of the experiment, here’s what I asked a couple of the team leaders to do:

- Talk with your teammates about the experiment

- Define “block” for your team

- Minimally instrument your kanban system to gather data, including the block reason and duration

The first two were relatively simple: The team was up for it, and they defined “blocker” as anything that prevented someone from doing work if he had wanted to. “Instrumenting the system” wasn’t as easy as it could’ve been, because the team uses a poorly implemented Jira instance, so they went outside the system and used post-it notes on a physical wall. They then kept a spreadsheet with additional data (duration, reason) to tie the blockers back to their Jira cards.

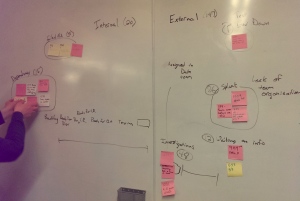

Over the next four weeks, the team collected 19 blockers, placing each post-it note on the wall in either an “internal” (caused by them) or “external” (caused by something outside the team, including customer and dependent systems) column. We then gathered in a conference room to convene a blocker-analysis session to:

- further cluster the blockers into more discrete categories

- calculate how many days of delay (the enemy of flow!) the blockers caused

- root-cause the internal categories

- find out where to focus improvement efforts

The analysis session was eye-opening. We started with the two main columns (internal, external) and as we quickly discussed each blocker, sub-categories, such as “dependency” and “waiting on info” emerged. Within minutes, we were able to see — and quantify the delay cost — of the team’s most egregious blockers.

Some insights that the team came away with:

Some insights that the team came away with:

-

internal blockers caused 20 days worth of delay

-

external blockers caused 147 days worth of delay

- the biggest blocker cluster accounted for 86 days of delay

That biggest blocker cluster now allows the team to have a conversation with the customer that goes something like this: “Over a four-week period, we had three blockers in this area. If this continues, that means you have a 75% chance each week of creating a blocker that costs you an average of 29 days. Is this acceptable to you?”

Ultimately, it may indeed be acceptable. But the customer is now aware of the approximate cost of problems and can manage risk in an informed way.

For the internal blockers, we conducted a root-cause analysis (using fishbone technique, though I’ll admit my “fish” leaves something to be desired!). So the team can go forward to address both external blockers (through a conversation with the customer) and the internal blockers (through their decisions).

For the internal blockers, we conducted a root-cause analysis (using fishbone technique, though I’ll admit my “fish” leaves something to be desired!). So the team can go forward to address both external blockers (through a conversation with the customer) and the internal blockers (through their decisions).

Other lessons learned:

- Some blockers turned out to be simply time spent chasing information about backlog items rather than true blockers of committed work, so the team added “… for committed work” to their blocker definition. (It’s important to understand your commitment point.)

- Depending on how you want to address blockers, you might choose to sort them differently. For example, the team considered sorting its external blockers not by source but by which customer contact was responsible.

Reblogged this on An exercise in herding cats and commented:

I have just heard of this for the first time today and I think it is such a good idea. I am a firm believer that metrics can be used to help and this is a great example of where focused metrics can show the true impact of what might otherwise be perceived as a minor problem.

I also don’t see this as limited to KanBan either, it would work just as well on a Scrum Board, and a moment or two identifying the impact of impediments is time well spent.

Can I also add that impediments are not limited to blockers: extra work, anything slowing you down, lack of tools or other resources etc also counts as an impediment.